Documentation depth

14 technical chapters

Complete thesis structure with methods, controls, and acceptance criteria.

Research Thesis

This document is written as a technical-first research thesis for clinicians, engineering teams, regulatory reviewers, and quality stakeholders. It describes the scientific rationale, sensing pipeline, quality-control doctrine, model governance, validation strategy, risk case, and claim boundaries that govern NoDraw. The framing is ambitious at the access layer and conservative at the clinical claim layer: scale early detection access while preserving explicit uncertainty and confirmatory-care requirements.

Documentation depth

14 technical chapters

Complete thesis structure with methods, controls, and acceptance criteria.

Evidence posture

Inline-cited

Claims are bound to references and release governance artifacts.

Safety doctrine

Pass / Reacquire / Abstain

Outputs are withheld whenever certainty or quality is insufficient.

Claim boundary

Early risk signals

Not a standalone replacement for clinician diagnosis or treatment pathways.

Version: v1.0-thesis

Last reviewed: February 15, 2026

Evidence cadence: Quarterly baseline; immediate update on evidence-tier or safety-policy changes.

Boundary notice

In plain language: Read this path first if you want the core science, limits, and safety logic without reading all 14 chapters in one pass.

What this page proves

What this page does not claim

In plain language: this section shows what is strongest today, where evidence is still developing, and which source supports each claim.

NoDraw is designed for conservative early risk signals with explicit abstain behavior instead of forced output.

Pass/reacquire/abstain controls are implemented before inference to prevent low-integrity captures from being interpreted.

Every release claim must map to a claim-evidence row with thresholds, owners, and boundary language.

Risk acceptance is continuously reassessed using drift signals, incident patterns, and change classes.

Feasibility, analytical validation, and clinical performance are separated to avoid metric over-interpretation.

Clear boundary language and explainable outputs reduce unsafe self-interpretation by end users.

In plain language: use this matrix to separate current supported behavior from emerging evidence and explicit non-claims.

| Status | What it means | Current position | Reader action |

|---|---|---|---|

| Supported now | Backed by defined controls and mapped evidence artifacts. | Capture gating, abstain policy, claim boundaries. | Use as baseline understanding of current product posture. |

| Emerging evidence | Methods and controls are defined, but broader validation expansion is in progress. | Subgroup scaling, external-site robustness, update-class thresholds. | Review chapter open questions before drawing deployment conclusions. |

| Not claimed | Explicitly out of scope or prohibited until evidence posture changes. | Autonomous final diagnosis, universal lab-equivalence claims. | Treat as non-deployable narrative until formal claim map changes. |

In plain language: this is the exact system flow from capture to either output or abstain.

Guided capture

User follows camera guidance for stable ocular acquisition.

If capture is unstable, move to reacquire instructions.

Quality control

QC scores lighting, focus, motion, and signal integrity.

If threshold fails, output is withheld and recapture is required.

Feature extraction

Validated preprocessing transforms are applied with versioned controls.

Transformation mismatches trigger conservative handling and logging.

Model ensemble

Endpoint models produce calibrated probabilities with uncertainty context.

Conflicting or low-confidence behavior triggers arbiter constraints.

Rules + ML arbiter

Deterministic policy rules gate what can be shown and how.

Boundary violations force abstain and escalation messaging.

Abstain or output

User receives either constrained risk signal guidance or explicit abstain notice.

No uncertain output is shown without passing policy requirements.

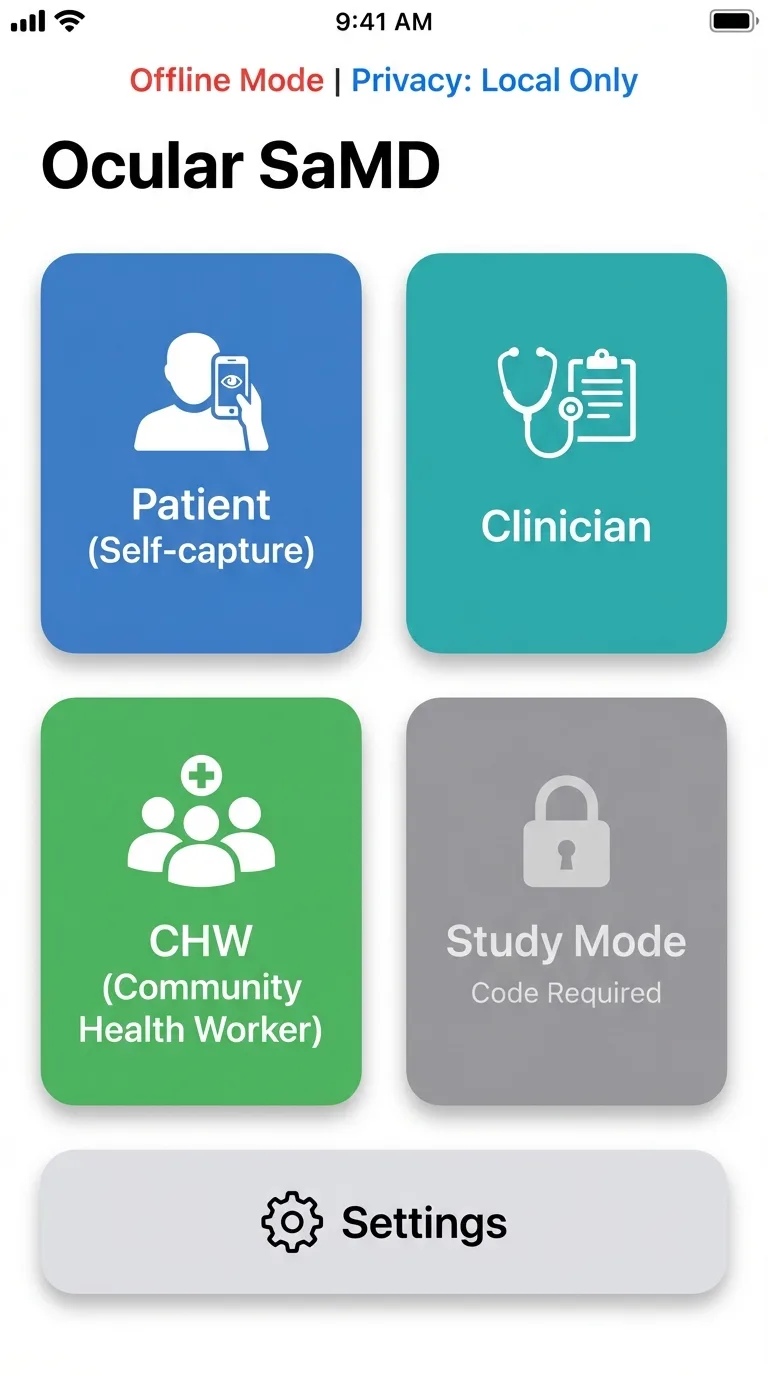

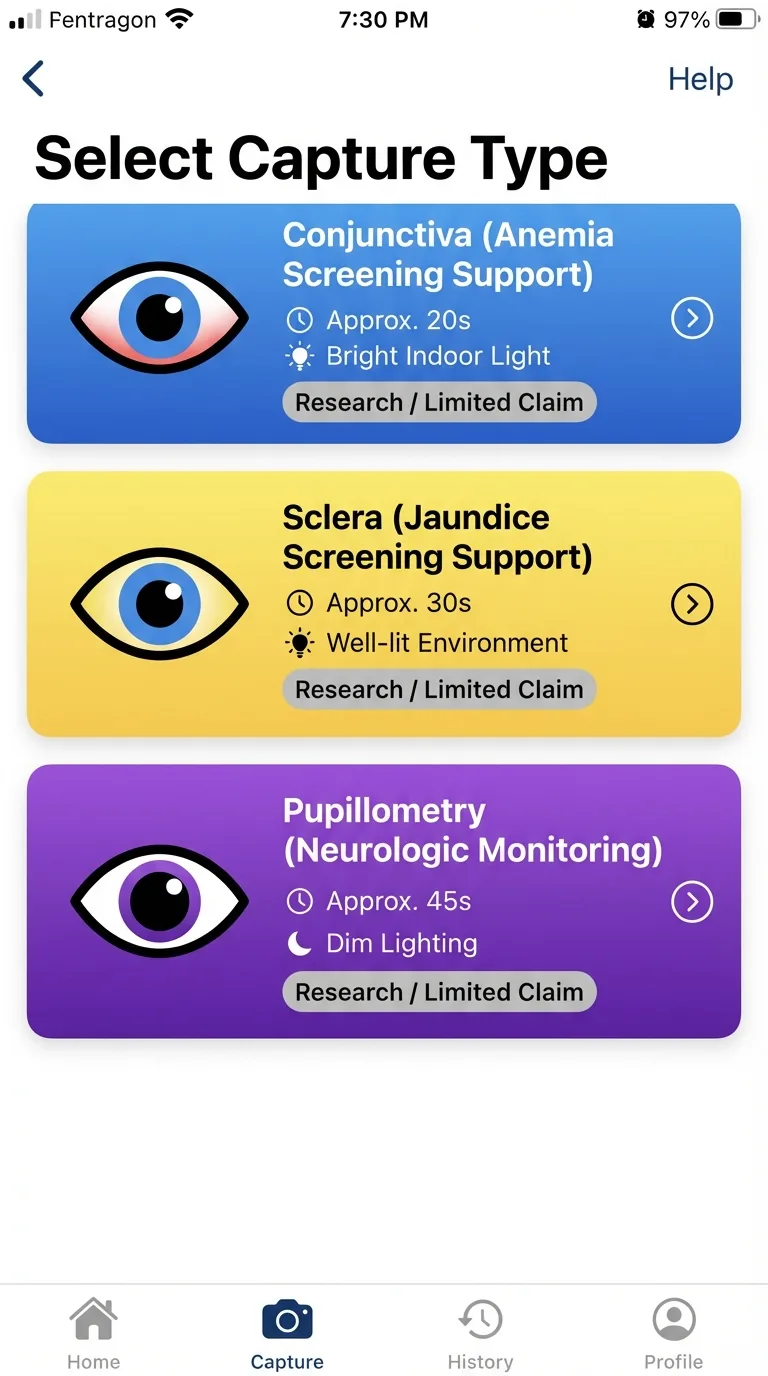

Interface Evidence

These product interfaces illustrate the operational workflow discussed in this thesis: mode selection, quality-gated capture, and role-aware routing.

Select capture type

Choose the right capture path for the biomarker context.

Quality gate HUD

Real-time quality checks gate results and trigger recapture when needed.

Role-aware routing

Different users enter role-specific, privacy-aware workflows.

These are interface illustrations of workflow behavior; validation claims are established in cited study sections.

In plain language: the system is designed for early risk signals and mandatory follow-up routing, not autonomous diagnosis.

Use the complete chapter sequence for architecture, regulatory, validation, risk, and operations depth.

Chapter jump

Chapter 01

NoDraw is presented as a phone-scale diagnostic access thesis: transform smartphone camera signals into quality-gated early risk outputs while preserving strict medical safety boundaries, explicit uncertainty handling, and confirmatory-care routing.

Why this chapter exists

Reader question: What is NoDraw claiming, and what is it not claiming?

Without clear thesis boundaries, readers cannot distinguish strategic vision from release-safe clinical positioning.

Estimated reading time: 7 min

The central thesis is that a smartphone-only ocular biomarker stack can become clinically useful when engineered as a constrained safety system rather than a generic prediction engine. The product promise is intentionally ambitious at the access layer and intentionally conservative at the claim layer: increase screening reach, reduce startup friction, and accelerate triage without claiming definitive diagnosis autonomy. This dual stance is the core design doctrine of the program.

From an evidence perspective, this thesis only holds if each output is mediated by measurable quality controls, reproducible acquisition constraints, and calibrated confidence logic. The practical implication is that the app must be designed to abstain frequently in adverse conditions rather than fabricate certainty. Program value is therefore determined by both what it predicts and what it refuses to predict.

Visionary framing with controlled claims

NoDraw is framed as infrastructure for earlier detection access at population scale. It is not framed as a replacement for clinician-led diagnosis or laboratory confirmation.

Program thesis pillars

Quantitative anchor

Utility = Access Gain x (Clinical Signal Quality) x (Safety Compliance)Program utility is treated as a multiplicative relationship: failures in safety compliance or signal quality collapse practical value regardless of access scale.

| Dimension | Target posture | Operational implication |

|---|---|---|

| Clinical positioning | Early risk signal system | No definitive standalone diagnosis claim |

| Form factor | Smartphone-only | No external optical attachment or specimen kit required |

| Safety behavior | Pass / Reacquire / Abstain | Output withheld when signal quality or certainty is insufficient |

| Deployment model | Request-first with confirmatory routing | No treatment decision automation without clinician confirmation |

Program positioning and release doctrine

Figure: Program thesis and safety doctrine

mermaid

flowchart LR

Vision[Visionary phone-scale access] --> Gate[Quality gate doctrine]

Gate --> Output[Early risk signals]

Output --> Confirm[Confirmatory clinical pathway]High-level doctrine: visionary research framing with conservative medical safety boundaries.

The thesis explicitly assumes that the greatest near-term clinical value of phone-only diagnostics lies in earlier risk visibility rather than endpoint finality. In low-friction screening contexts, the system can reduce the delay between subjective symptom onset and objective triage action, particularly where laboratory access windows are narrow or operationally expensive. This value proposition requires discipline: scale cannot be used to justify lower evidence thresholds. Instead, scale is treated as the multiplier that makes strict safety controls more important, not less important, because any defect in interpretation can propagate rapidly across large populations.

Program leadership is framed around three parallel obligations: scientific coherence, operational control, and communication fidelity. Scientific coherence means feature-to-label logic must remain explainable and testable. Operational control means model and rule changes are release-governed rather than opportunistic. Communication fidelity means public statements must remain synchronized with measurable evidence posture. When one of these obligations drifts, the program enters a reputational and safety debt state. This chapter therefore treats executive language as a technical artifact, because framing errors at the top of the stack often become hidden defects in downstream product behavior and policy decisions.

A notable design principle in this thesis is that abstain behavior is interpreted as a quality success signal, not a conversion failure. In classical consumer UX, withholding output is viewed as friction; in medical software, withholding uncertain output is the ethical center of the product. This redefinition requires both interface and operations alignment: users need understandable abstain messaging, support teams need escalation scripts, and analytics teams need abstain telemetry by device cohort. If abstain behavior is suppressed to improve vanity metrics, the system drifts toward unsafe confidence and breaks the foundational thesis.

The executive scope also commits to longitudinal accountability. A thesis-grade product cannot rely on launch-time evidence alone; it must continuously validate that real-world populations, software updates, and camera pipeline drift have not invalidated prior claims. For this reason, chapter-level acceptance criteria are intentionally operational and version-aware. Each claim is considered provisional until reinforced by post-market stability evidence. This posture preserves ambition while acknowledging epistemic limits in dynamic mobile ecosystems where hardware and firmware conditions can change faster than traditional clinical evidence cycles.

Executive accountability is also expanded around measurable thesis health indicators. The program should track evidence freshness, boundary adherence, abstain appropriateness, and escalation completion quality as leadership metrics, not only conversion or acquisition growth. This avoids a common digital health distortion where business dashboards silently reward aggressive output behavior while safety controls degrade. By defining leadership KPIs that include conservative operating behavior, the executive layer can protect technical teams from short-horizon pressure that would otherwise undermine risk posture. This chapter therefore positions executive governance as the first control surface for responsible scaling.

The thesis further commits to decision transparency across internal and external stakeholders. When model behavior changes, reviewers should be able to identify what changed, why it changed, how it was validated, and which claims were affected. This principle reduces ambiguity during release review and during external scrutiny from clinical partners. It also creates a durable institutional memory, so decisions remain understandable even when teams evolve. In practice, executive clarity is expressed through versioned claim maps, chapter-level acceptance gates, and auditable change logs that keep strategic ambition aligned with verifiable evidence.

This chapter is expanded as a deeper methodological narrative around executive thesis governance and strategic safety alignment. The thesis assumes that durable clinical utility emerges only when early-risk signal doctrine and abstain-first safety logic is translated into reproducible operating behavior, rather than left as a conceptual claim. That translation requires explicit mechanism descriptions, controlled vocabulary, and measurable constraints that can survive real deployment noise. A major editorial objective in this pass is to make hidden assumptions visible so expert reviewers can challenge them before they propagate into release posture. The chapter therefore emphasizes not just what the system intends to do, but why the chosen framing is technically defensible under smartphone-only limits, heterogeneous devices, and variable field conditions. By making chapter intent inspectable at this level, the document moves closer to thesis-grade rigor and reduces interpretation ambiguity across engineering, clinical, and regulatory readers.

At execution level, the expanded prose details how cross-functional claim governance and leadership KPI design should be implemented as a controlled workflow instead of an ad hoc optimization effort. This includes defining preconditions, measurable outputs, failure states, and owner accountability for every critical transition. The chapter now treats process clarity as a safety property, because unclear operational boundaries usually manifest as delayed incident detection, inconsistent escalations, and policy drift over time. It also clarifies that quality outcomes must be interpreted through stratified telemetry rather than aggregate averages, especially in mobile ecosystems where device cohorts can behave differently under identical logic. These additions help teams reason about practical deployment consequences before release and ensure that chapter guidance is actionable in production, not merely persuasive in documentation.

From a risk perspective, this section further expands the treatment of strategic overclaim pressure and metric misalignment. The goal is not to enumerate generic hazards, but to define causal pathways that can be monitored, mitigated, and re-evaluated as software and environment conditions evolve. The editorial pass stresses that conservative behavior must be designed into the system architecture, communication layer, and review process simultaneously. If any one of these control surfaces weakens, the safety profile can degrade even while nominal model metrics appear stable. To prevent that failure mode, the chapter now reinforces explicit boundary language, threshold governance discipline, and predefined corrective-action triggers. This style of writing is intentionally procedural: it allows reviewers to infer how teams should act when evidence becomes contradictory or when operating assumptions no longer hold in the field.

The final long-form paragraph in this chapter links local detail to global program credibility by focusing on evidence freshness, boundary compliance, and traceable decision history. A thesis-level artifact must show that chapter claims can be defended repeatedly across release cycles, not only at publication time. For this reason, the text now highlights versioning discipline, evidence refresh cadence, and cross-chapter consistency checks as mandatory controls. This chapter's integrity determines whether users encounter trustworthy guidance or confidence-inflated narratives is used as the practical consequence lens: if this chapter is implemented well, users receive clearer and safer outcomes; if implemented poorly, uncertainty is obscured and risk is transferred silently to downstream care pathways. By adding this integration layer, the chapter becomes a decision instrument for technical leadership rather than a static reference section, and it aligns the page with long-horizon evidence governance expectations.

Boundary Statement

NoDraw outputs early risk signals only; treatment decisions require clinician confirmation and appropriate reference testing.

Acceptance criteria

Key takeaway

NoDraw is framed as an early-risk signal platform with explicit abstain and confirmatory-care doctrine.

Open questions

Chapter 02

This chapter translates existing AstraCBC corpus documents into an implementation-ready gap closure map: what is already specified, what is underspecified, and what evidence artifacts must be generated to support thesis-grade publication and release governance.

Why this chapter exists

Reader question: Which evidence gaps are still material before claims can be trusted?

Readers need to see not just what is written, but where evidence is incomplete or governance is unresolved.

Estimated reading time: 8 min

The internal corpus already defines a robust skeleton: phone-only architecture, quality gates, arbitration logic, risk register intent, and validation trajectory. What it lacks in the product-facing research page is depth of representation. The route currently summarizes outcomes but does not expose assumptions, formulas, benchmark design, or artifact-level traceability needed by technical reviewers.

A gap-first approach is used here because credibility failure in medical software usually arises from omission rather than commission: missing dataset governance, missing subgroup criteria, missing claim boundaries, and missing operational thresholds. The redesign therefore treats documentation as an executable governance layer. If a claim cannot be traced to evidence fields, the claim is considered non-deployable.

Gap closure method

Quantitative anchor

Claim Readiness Score = (Evidence Completeness x Traceability x Reproducibility) / Open Critical GapsA practical heuristic for whether a claim can graduate from concept narrative to externally defensible technical communication.

| Gap class | Observed state | Required closure action |

|---|---|---|

| Dataset disclosure | No public structured dataset card | Publish capture protocol, cohort strata, exclusion criteria |

| Quantitative outputs | No performance metrics in current route | Expose endpoint metrics table and confidence intervals |

| Claim-evidence traceability | Template exists but not rendered in product | Render claim-to-evidence map in research route |

| Operational safety evidence | Risk template present but not surfaced | Publish top hazards, controls, residual posture |

AstraCBC extract gap inventory translated to implementation work

Figure: Gap closure sequence

mermaid

flowchart TD

Gap[Source gap] --> Spec[Data/spec definition]

Spec --> Build[Renderer + evidence block]

Build --> Verify[Consistency and citation checks]

Verify --> Publish[Research chapter release]How source-document gaps translate into concrete productized evidence artifacts.

The extract process distinguishes between missing information and missing governance. Missing information refers to absent metrics, cohort definitions, or protocol specifics. Missing governance refers to unassigned ownership, unclear acceptance thresholds, or no change-control discipline. Both failure modes can independently invalidate claim credibility. A section may look complete narratively while still being non-operational if no owner can verify its assertions. The editorial pass therefore upgrades prose into accountable evidence language, where each statement can be traced to either an internal source artifact or an external framework requirement with a clear implementation consequence.

Gap closure is treated as an iterative systems process rather than a one-time documentation sprint. As chapters mature, new second-order gaps become visible: for example, a complete table may expose missing statistical assumptions, or a clear claim boundary may reveal unsupported UX phrasing elsewhere. The research page is therefore designed to behave like a living dossier. Version tagging, explicit review cadence, and chapter-level acceptance criteria create a mechanism to absorb new evidence and retire outdated assumptions without rewriting foundational doctrine each cycle.

Another key closure action is harmonizing terminology across product, clinical, and regulatory domains. Terms such as risk signal, confidence band, severity grade, and confirmatory path must mean the same thing in UX copy, validation reports, and governance templates. Terminology drift creates hidden ambiguity that can distort trial design, analytics interpretation, and legal review. This pass intentionally normalizes language at chapter level so downstream teams can reuse exact definitions. Controlled vocabulary is treated as a safety control because inconsistent terms frequently lead to inconsistent decisions in distributed programs.

Finally, gap closure includes publication integrity controls. Inline citations are not cosmetic; they force sentence-level accountability and reduce the risk of unsupported narrative escalation. By requiring citations on non-trivial assertions, the page becomes self-auditing: unsupported statements are immediately visible as citation gaps. This improves both internal review speed and external trust. In effect, the editorial system becomes part of the quality system, because documentation defects can be detected and corrected using the same traceability mindset applied to software defects.

A deeper extraction pass should explicitly separate definitional gaps, quantitative gaps, and governance gaps, because each requires different remediation. Definitional gaps are solved by controlled vocabulary and scope clarity. Quantitative gaps require study design, datasets, and metrics. Governance gaps require ownership, timelines, and release controls. Treating them as one generic backlog category obscures progress and creates false completion signals. This chapter now recommends a gap board keyed to evidence criticality: blocker gaps that prevent claim publication, structural gaps that slow review, and enhancement gaps that improve resilience but are not release blockers. That structure improves execution focus while preserving long-term quality.

The extraction process should also include contradiction checks across documents and UI copy. A frequent failure pattern is local correctness with global inconsistency: one chapter states conservative boundaries while another implies near-definitive behavior. Contradictions of this type are high-risk because they are difficult to detect in isolated review. By running systematic contradiction sweeps and linking outcomes to citation IDs, the research route becomes a coherence instrument rather than a content repository. The result is a tighter evidence narrative where reviewers can validate not only detail depth, but also consistency across all decision-critical surfaces.

This chapter is expanded as a deeper methodological narrative around gap taxonomy and source-to-claim traceability. The thesis assumes that durable clinical utility emerges only when assumption extraction and contradiction-resilient terminology control is translated into reproducible operating behavior, rather than left as a conceptual claim. That translation requires explicit mechanism descriptions, controlled vocabulary, and measurable constraints that can survive real deployment noise. A major editorial objective in this pass is to make hidden assumptions visible so expert reviewers can challenge them before they propagate into release posture. The chapter therefore emphasizes not just what the system intends to do, but why the chosen framing is technically defensible under smartphone-only limits, heterogeneous devices, and variable field conditions. By making chapter intent inspectable at this level, the document moves closer to thesis-grade rigor and reduces interpretation ambiguity across engineering, clinical, and regulatory readers.

At execution level, the expanded prose details how closure workflows for definitional, quantitative, and governance gaps should be implemented as a controlled workflow instead of an ad hoc optimization effort. This includes defining preconditions, measurable outputs, failure states, and owner accountability for every critical transition. The chapter now treats process clarity as a safety property, because unclear operational boundaries usually manifest as delayed incident detection, inconsistent escalations, and policy drift over time. It also clarifies that quality outcomes must be interpreted through stratified telemetry rather than aggregate averages, especially in mobile ecosystems where device cohorts can behave differently under identical logic. These additions help teams reason about practical deployment consequences before release and ensure that chapter guidance is actionable in production, not merely persuasive in documentation.

From a risk perspective, this section further expands the treatment of silent omissions that appear complete but remain non-deployable. The goal is not to enumerate generic hazards, but to define causal pathways that can be monitored, mitigated, and re-evaluated as software and environment conditions evolve. The editorial pass stresses that conservative behavior must be designed into the system architecture, communication layer, and review process simultaneously. If any one of these control surfaces weakens, the safety profile can degrade even while nominal model metrics appear stable. To prevent that failure mode, the chapter now reinforces explicit boundary language, threshold governance discipline, and predefined corrective-action triggers. This style of writing is intentionally procedural: it allows reviewers to infer how teams should act when evidence becomes contradictory or when operating assumptions no longer hold in the field.

The final long-form paragraph in this chapter links local detail to global program credibility by focusing on citation-bound sentence accountability and auditable closure status. A thesis-level artifact must show that chapter claims can be defended repeatedly across release cycles, not only at publication time. For this reason, the text now highlights versioning discipline, evidence refresh cadence, and cross-chapter consistency checks as mandatory controls. When gap analysis is weak, users are exposed to promises that are not fully supported by evidence is used as the practical consequence lens: if this chapter is implemented well, users receive clearer and safer outcomes; if implemented poorly, uncertainty is obscured and risk is transferred silently to downstream care pathways. By adding this integration layer, the chapter becomes a decision instrument for technical leadership rather than a static reference section, and it aligns the page with long-horizon evidence governance expectations.

Boundary Statement

Unmapped claims are treated as aspirational and are excluded from release-critical positioning.

Acceptance criteria

Key takeaway

Gap closure is a managed evidence workflow, not a one-time copy update.

Open questions

Chapter 03

Defines intended use, user cohorts, device assumptions, and hard non-claims for a smartphone-only ocular biomarker SaMD deployment profile.

Why this chapter exists

Reader question: Where does the phone-only system work reliably, and where must it abstain?

Scope drift is a major source of overclaim and misinterpretation in medical software products.

Estimated reading time: 8 min

NoDraw is scoped as a software-led triage system that transforms guided ocular capture into early risk labels with uncertainty metadata and escalation pathways. The intended role is decision support for access and triage, not autonomous diagnosis authority. This distinction is central to risk governance, communication strategy, and regulatory framing.

Scope includes smartphone camera acquisition, on-device quality gating, feature extraction, model inference, and rule-based arbitration. Scope excludes any requirement for attachment optics, consumable kits, or specimen collection. Scope also excludes unsupported claims such as universal device parity without qualification and definitive diagnosis without confirmatory evaluation.

Intended-use envelope

Non-claim constraints

The system does not claim direct blood cell counting from camera imagery, nor universal biomarker equivalence against laboratory methods across all contexts.

Quantitative anchor

Deployable Scope = Intended Use - {Unsupported Capture Modes, Unsupported Claims, Unsupported Devices}Scope control is treated as subtraction against failure-prone and non-validated domains.

| Domain | In scope | Out of scope | Reason |

|---|---|---|---|

| Capture region | Conjunctiva, sclera, pupil | Non-validated camera regions | Signal reproducibility and protocol maturity |

| Hardware | Native smartphone camera stack | External lens modules | Strict deployment simplicity and scale |

| Clinical output | Triage signal labels | Definitive treatment directives | Boundary safety and legal posture |

| Fundus without attachments | Research-only high-abstain | Routine production output | Geometry and optical constraints |

In-scope vs out-of-scope scope boundaries

Figure: Scope envelope

Depicts hard boundaries between validated ocular zones and excluded acquisition pathways.

Scope engineering is framed as a risk-reduction instrument, not a feature-limitation compromise. In practical terms, this means selecting clinically meaningful use cases where smartphone optics and protocol controls can produce reproducible input distributions. The thesis rejects broad but weakly substantiated scope expansion in favor of narrower domains with stronger evidence progression. This approach improves eventual scalability because validated scope can be expanded systematically, while over-broad scope typically produces unstable outputs that force reactive rollback and confidence erosion.

The user-facing product promise is intentionally split into two layers: diagnostic orientation and decision boundary. Diagnostic orientation tells users what the system can flag early. Decision boundary tells users what the system cannot finalize independently. Both are required for safe interpretation. If only orientation is shown, users overtrust outputs; if only boundary is shown, users ignore utility. Scope-complete communication therefore pairs capability statements with explicit limitations in the same context block, reducing the cognitive gap between signal receipt and follow-up action.

Device qualification is another core scope boundary. Smartphone-only does not imply device-agnostic parity. Camera pipeline differences can materially alter feature stability, so support matrices are treated as clinical controls rather than marketing constraints. Unsupported devices are not soft-failed; they are hard-gated to prevent unsafe inference under unknown pipeline conditions. This policy allows expansion over time through evidence-backed onboarding of additional device families. The chapter therefore treats compatibility management as part of the intended-use envelope and not a separate operations concern.

Scope also includes temporal boundaries. Outputs are context-bound to the capture episode and do not imply persistent diagnosis state without longitudinal evidence. Where longitudinal mode exists, it modifies confidence and urgency only through predefined rules, not heuristic trend storytelling. This temporal discipline matters because users may infer chronic conclusions from single-session outputs if boundaries are not explicit. The scope framework therefore includes not only what is measured and on which devices, but also when and for how long an interpretation is considered valid.

Scope expansion decisions should be managed through a staged readiness ladder. Stage one establishes physiological plausibility and protocol reliability for a narrow context. Stage two demonstrates analytical stability under device and demographic stratification. Stage three adds clinical utility evidence and operational readiness constraints. Only after all three are complete should scope be widened. This ladder prevents premature market statements and creates explicit criteria for when a use case transitions from exploratory to deployable. It also protects support and clinical teams from absorbing uncertainty that should have been resolved in pre-release evidence work.

An important editorial refinement is describing scope boundaries in user-operational terms, not only technical terms. Users and partners need to know when outputs are expected to be reliable, what capture contexts are unsupported, and what next step is required when uncertainty is high. Scope language should therefore bind engineering constraints to user actions: supported devices, minimum capture conditions, abstain behavior, and confirmatory routing. This makes scope enforcement legible and reduces the gap between internal validation logic and practical field behavior, which is essential for safe adoption in mixed-resource settings.

This chapter is expanded as a deeper methodological narrative around intended-use envelope design and exclusion governance. The thesis assumes that durable clinical utility emerges only when scope-limited physiological claims tied to validated capture contexts is translated into reproducible operating behavior, rather than left as a conceptual claim. That translation requires explicit mechanism descriptions, controlled vocabulary, and measurable constraints that can survive real deployment noise. A major editorial objective in this pass is to make hidden assumptions visible so expert reviewers can challenge them before they propagate into release posture. The chapter therefore emphasizes not just what the system intends to do, but why the chosen framing is technically defensible under smartphone-only limits, heterogeneous devices, and variable field conditions. By making chapter intent inspectable at this level, the document moves closer to thesis-grade rigor and reduces interpretation ambiguity across engineering, clinical, and regulatory readers.

At execution level, the expanded prose details how device qualification, context gating, and enforceable non-claim behavior should be implemented as a controlled workflow instead of an ad hoc optimization effort. This includes defining preconditions, measurable outputs, failure states, and owner accountability for every critical transition. The chapter now treats process clarity as a safety property, because unclear operational boundaries usually manifest as delayed incident detection, inconsistent escalations, and policy drift over time. It also clarifies that quality outcomes must be interpreted through stratified telemetry rather than aggregate averages, especially in mobile ecosystems where device cohorts can behave differently under identical logic. These additions help teams reason about practical deployment consequences before release and ensure that chapter guidance is actionable in production, not merely persuasive in documentation.

From a risk perspective, this section further expands the treatment of scope creep that outpaces validation and destabilizes output reliability. The goal is not to enumerate generic hazards, but to define causal pathways that can be monitored, mitigated, and re-evaluated as software and environment conditions evolve. The editorial pass stresses that conservative behavior must be designed into the system architecture, communication layer, and review process simultaneously. If any one of these control surfaces weakens, the safety profile can degrade even while nominal model metrics appear stable. To prevent that failure mode, the chapter now reinforces explicit boundary language, threshold governance discipline, and predefined corrective-action triggers. This style of writing is intentionally procedural: it allows reviewers to infer how teams should act when evidence becomes contradictory or when operating assumptions no longer hold in the field.

The final long-form paragraph in this chapter links local detail to global program credibility by focusing on readiness ladders linking exploratory hypotheses to deployable statements. A thesis-level artifact must show that chapter claims can be defended repeatedly across release cycles, not only at publication time. For this reason, the text now highlights versioning discipline, evidence refresh cadence, and cross-chapter consistency checks as mandatory controls. Scope discipline ensures users receive outputs matched to validated conditions instead of speculative interpretations is used as the practical consequence lens: if this chapter is implemented well, users receive clearer and safer outcomes; if implemented poorly, uncertainty is obscured and risk is transferred silently to downstream care pathways. By adding this integration layer, the chapter becomes a decision instrument for technical leadership rather than a static reference section, and it aligns the page with long-horizon evidence governance expectations.

Boundary Statement

Any use case outside the validated scope envelope must default to abstain and referral guidance.

Acceptance criteria

Key takeaway

Deployable scope is tightly bounded by validated devices, contexts, and claim limitations.

Open questions

Chapter 04

Maps thesis claims to recognized standards and guidance frameworks required for a credible SaMD lifecycle.

Why this chapter exists

Reader question: How are scientific claims connected to formal quality and regulatory controls?

Evidence without controlled process execution is not enough for medical-grade software credibility.

Estimated reading time: 10 min

A thesis-grade research program must prove not only that outputs can be generated, but that those outputs are governed under a reproducible lifecycle model. For NoDraw, this means pairing evidence narrative with a standards-aligned quality operating system. IMDRF N41 frames evidence categories, ISO 13485 governs QMS mechanics, ISO 14971 governs risk posture, and IEC 62304 governs software controls.

Usability and safety are not post-processing tasks. IEC 62366-1 requirements are integrated directly into capture and escalation flows because most real-world harm in early-stage diagnostic software emerges from misunderstanding and misuse. Eye-directed lighting context is addressed through ISO 15004 and IEC 62471 references for risk framing and control boundaries.

Regulatory-grade AI operations additionally require controlled change mechanisms. FDA PCCP and cybersecurity guidance are used here as operational constraints: model updates must be pre-characterized, validated by change class, and deployed with monitoring and rollback controls. This chapter therefore treats documentation as part of runtime safety.

Artifact chain

Quantitative anchor

Regulatory Readiness = Evidence Quality + Process Compliance + Change Control DisciplineNo single domain can compensate for failure in another.

| Framework | Primary role | Core artifact |

|---|---|---|

| IMDRF N41 | SaMD clinical evaluation | Clinical Evaluation Plan and evidence chain |

| ISO 13485 | Quality management system | Design controls, CAPA, change control |

| ISO 14971 | Risk management | Hazard analysis and residual risk review |

| IEC 62304 | Software lifecycle | Software lifecycle plan, verification matrix |

| IEC 62366-1 | Usability engineering | Critical task analysis and summative report |

| ISO 15004 + IEC 62471 | Eye/light safety context | Illumination risk and exposure controls |

Regulatory and quality framework mapping

Figure: Regulatory traceability map

mermaid

flowchart LR

IMDRF --> CEP

ISO13485 --> QMS[QMS records]

ISO14971 --> RMF[Risk file]

IEC62304 --> SLP[Software lifecycle plan]

IEC62366 --> UE[Usability file]Framework-to-artifact mapping for review readiness.

A rigorous research route must make visible the bridge between scientific argument and controlled process execution. Regulatory frameworks are often cited abstractly; this chapter operationalizes them as concrete deliverables and decision checkpoints. For example, citing ISO 14971 without a maintained hazard-control map adds no real safety value. Likewise, citing IEC 62304 without release-classified verification evidence leaves software claims ungrounded. The editorial expansion therefore translates framework references into artifact obligations, ownership assignments, and review timing so compliance posture is inspectable rather than rhetorical.

The chapter also addresses sequencing across frameworks. QMS controls, risk controls, and software lifecycle controls are interdependent and should be staged coherently. A common failure mode is late-stage risk analysis after architecture choices are already fixed, which limits meaningful mitigation options. In this thesis, risk and usability signals are intended to influence architecture and protocol decisions early, before model and interface contracts harden. This sequencing reduces retrofit risk and supports cleaner traceability when external reviewers request rationale for major design decisions.

Controlled AI updates receive special treatment because model evolution is continuous while regulatory confidence depends on bounded change. The PCCP-inspired approach here defines update classes with associated validation burden and deployment constraints. Minor recalibration, feature pipeline updates, and endpoint logic changes are not treated equivalently. Each change class requires predefined test deltas, drift checks, and rollback preparedness before promotion. This chapter documents that logic so future model improvements can move faster without compromising evidentiary discipline or forcing ad hoc approval cycles.

Cross-jurisdiction privacy and security obligations are integrated into the same governance narrative. Clinical usefulness cannot be separated from lawful data handling and secure software operation. The thesis therefore links privacy doctrine, cybersecurity controls, and clinical governance rather than presenting them as separate compliance tracks. This integrated view matters operationally because incident response, auditability, and user trust often depend on coordinated handling across these domains. A mature SaMD posture requires that data governance decisions be as explicit and reviewable as inference and validation decisions.

A thesis-grade regulatory narrative should expose the complete design-control lineage: user need, design input, design output, verification, validation, and release decision rationale. When this lineage is visible, external reviewers can test whether evidence truly supports intended use. When hidden, compliance statements become difficult to trust even if underlying work exists. This chapter therefore emphasizes artifact architecture as much as standards references. The objective is not broad framework citation volume, but inspectable linkage from requirement to test evidence to residual risk acceptance.

QMS maturity is further defined by response quality under change and incident conditions. A compliant static state is insufficient for mobile software ecosystems where device firmware, camera behavior, and user patterns shift continuously. The program must demonstrate controlled adaptation: impact analysis, gated validation, decision logs, and post-release monitoring aligned to update class. By embedding this dynamic quality model in the chapter, NoDraw frames compliance as a continuous operating discipline, not a one-time documentation milestone prior to launch.

This chapter is expanded as a deeper methodological narrative around design-control lineage and standards-integrated quality operations. The thesis assumes that durable clinical utility emerges only when artifact-linked compliance translation from framework language to executable process is translated into reproducible operating behavior, rather than left as a conceptual claim. That translation requires explicit mechanism descriptions, controlled vocabulary, and measurable constraints that can survive real deployment noise. A major editorial objective in this pass is to make hidden assumptions visible so expert reviewers can challenge them before they propagate into release posture. The chapter therefore emphasizes not just what the system intends to do, but why the chosen framing is technically defensible under smartphone-only limits, heterogeneous devices, and variable field conditions. By making chapter intent inspectable at this level, the document moves closer to thesis-grade rigor and reduces interpretation ambiguity across engineering, clinical, and regulatory readers.

At execution level, the expanded prose details how coordinated sequencing across QMS, risk, usability, and software lifecycle controls should be implemented as a controlled workflow instead of an ad hoc optimization effort. This includes defining preconditions, measurable outputs, failure states, and owner accountability for every critical transition. The chapter now treats process clarity as a safety property, because unclear operational boundaries usually manifest as delayed incident detection, inconsistent escalations, and policy drift over time. It also clarifies that quality outcomes must be interpreted through stratified telemetry rather than aggregate averages, especially in mobile ecosystems where device cohorts can behave differently under identical logic. These additions help teams reason about practical deployment consequences before release and ensure that chapter guidance is actionable in production, not merely persuasive in documentation.

From a risk perspective, this section further expands the treatment of documentation-heavy but action-light compliance postures. The goal is not to enumerate generic hazards, but to define causal pathways that can be monitored, mitigated, and re-evaluated as software and environment conditions evolve. The editorial pass stresses that conservative behavior must be designed into the system architecture, communication layer, and review process simultaneously. If any one of these control surfaces weakens, the safety profile can degrade even while nominal model metrics appear stable. To prevent that failure mode, the chapter now reinforces explicit boundary language, threshold governance discipline, and predefined corrective-action triggers. This style of writing is intentionally procedural: it allows reviewers to infer how teams should act when evidence becomes contradictory or when operating assumptions no longer hold in the field.

The final long-form paragraph in this chapter links local detail to global program credibility by focusing on inspectable requirement-to-test-to-risk traceability under controlled change. A thesis-level artifact must show that chapter claims can be defended repeatedly across release cycles, not only at publication time. For this reason, the text now highlights versioning discipline, evidence refresh cadence, and cross-chapter consistency checks as mandatory controls. Strong quality governance improves consistency of safety behavior users experience across releases is used as the practical consequence lens: if this chapter is implemented well, users receive clearer and safer outcomes; if implemented poorly, uncertainty is obscured and risk is transferred silently to downstream care pathways. By adding this integration layer, the chapter becomes a decision instrument for technical leadership rather than a static reference section, and it aligns the page with long-horizon evidence governance expectations.

Boundary Statement

If process compliance cannot be demonstrated, output performance claims are considered non-deployable.

Acceptance criteria

Key takeaway

Standards references must map to concrete, owned artifacts and release controls.

Open questions

Chapter 05

Defines the practical optical and ergonomic protocol needed for repeatable smartphone-only ocular acquisition.

Why this chapter exists

Reader question: How does NoDraw handle optical instability in real user environments?

Capture quality is upstream of all inference validity and is the first safety-critical system layer.

Estimated reading time: 10 min

Smartphone ocular capture is constrained by ambient variability, glare, motion, and heterogeneous ISP pipelines. Therefore capture quality is treated as a protocol problem first and an ML problem second. The UI must actively stabilize user behavior through framing guides, hold timers, and adaptive feedback rather than passively collecting arbitrary frames.

Ergonomic design has direct analytical consequences: head pose, blink timing, capture distance, and lighting angle materially change signal viability. The product strategy accepts this and encodes ergonomic controls into acquisition flow. In this model, UX design is part of the sensing stack and should be validated with the same rigor as model components.

Capture protocol controls

Quantitative anchor

Capture Quality Index = w1*focus + w2*stability + w3*exposure + w4*(1-glare)Composite quality index used to determine pass or reacquire behavior.

| Control point | Specification target | Failure response |

|---|---|---|

| Frame hold window | 3-5 seconds stable window | Prompt hold-still recapture |

| ROI confidence | >= 0.75 | Reject frame and request recapture |

| Glare contamination | <= 8% ROI pixels | Lighting guidance + reattempt |

| Motion artifact score | <= 0.20 | Recapture with stabilization guidance |

| Exposure clipping | <= 3% clipped channel area | Auto exposure adjust and retake |

Capture protocol quantitative controls

Figure: Capture and ergonomics flow

mermaid

flowchart LR

Guide[Guided framing] --> Hold[Stability hold]

Hold --> QC1[Focus/exposure checks]

QC1 -->|pass| Bundle[Frame bundle accepted]

QC1 -->|fail| Retry[Recapture guidance]Protocol steps from framing to accepted frame bundle.

Capture reliability is framed as a constrained measurement protocol that must survive unconstrained user environments. The design objective is not perfect optical control but reproducible minimum viable signal integrity under practical conditions. This requires instructive UI, adaptive feedback, and deterministic rejection criteria. In editorial terms, the chapter now clarifies that user behavior is an active component of the sensing system: guidance quality, prompt timing, and interaction sequencing can materially affect feature quality and downstream label stability.

Ergonomics are treated as quantitative variables. Distance to camera, head pose variance, blink frequency, and hand tremor each influence usable frame yield. By instrumenting these factors, the product can distinguish recoverable acquisition issues from non-recoverable contexts that require abstain. This distinction has practical impact on support burden and user trust. A system that repeatedly requests recapture without diagnosing cause appears unreliable; a system that explains specific capture constraints and routes decisively improves adherence and perceived reliability.

The chapter further clarifies device heterogeneity strategy. Rather than trying to normalize all pipeline differences out of existence, the program uses a hybrid approach: deterministic preprocessing to reduce variance, capability gating to exclude high-risk devices, and stratified validation to quantify remaining differences. This approach is more defensible than blanket claims because it produces explicit evidence about where the system performs reliably and where additional controls are required. In practice, this supports staged compatibility expansion with measurable risk management.

Lighting context is a recurring challenge and is handled through both protocol and policy. Protocol controls exposure and glare; policy controls interpretation under persistent adverse conditions. The editorial expansion emphasizes that lighting guidance is not merely UX polish. It is a safety-critical component that prevents false certainty. Capture conditions that repeatedly violate glare or clipping limits must route to abstain with clear follow-up direction. This design choice protects users from unstable outputs and keeps system behavior aligned with conservative clinical intent.

Capture engineering should be documented with explicit tolerance budgets for each instability source: illumination variation, focus drift, motion blur, gaze deviation, and occlusion. A tolerance-budget approach helps teams reason about compounding error. For example, a marginally acceptable exposure condition may become unacceptable when combined with motion and glare. Without budget thinking, controls are often tuned in isolation and fail under real-world interaction. This chapter now encourages joint-condition testing and composite QC criteria so protocol resilience is evaluated under realistic capture combinations rather than idealized single-variable scenarios.

Ergonomic protocol design is also expanded as a fairness and throughput issue. Users with tremor, older users, and users in constrained environments may require different prompt pacing and stabilization guidance. If the protocol assumes narrow interaction behavior, abstain rates can cluster inequitably across cohorts. This chapter recommends adaptive guidance windows, structured retry sequencing, and explicit device posture prompts to reduce avoidable acquisition failure. Such measures improve inclusivity while protecting analytical integrity, reinforcing the thesis that capture UX is part of the measurement system itself.

This chapter is expanded as a deeper methodological narrative around capture physics and ergonomic protocol resilience. The thesis assumes that durable clinical utility emerges only when signal-integrity constraints under uncontrolled smartphone environments is translated into reproducible operating behavior, rather than left as a conceptual claim. That translation requires explicit mechanism descriptions, controlled vocabulary, and measurable constraints that can survive real deployment noise. A major editorial objective in this pass is to make hidden assumptions visible so expert reviewers can challenge them before they propagate into release posture. The chapter therefore emphasizes not just what the system intends to do, but why the chosen framing is technically defensible under smartphone-only limits, heterogeneous devices, and variable field conditions. By making chapter intent inspectable at this level, the document moves closer to thesis-grade rigor and reduces interpretation ambiguity across engineering, clinical, and regulatory readers.

At execution level, the expanded prose details how tolerance-budget testing, adaptive guidance, and cohort-aware capture ergonomics should be implemented as a controlled workflow instead of an ad hoc optimization effort. This includes defining preconditions, measurable outputs, failure states, and owner accountability for every critical transition. The chapter now treats process clarity as a safety property, because unclear operational boundaries usually manifest as delayed incident detection, inconsistent escalations, and policy drift over time. It also clarifies that quality outcomes must be interpreted through stratified telemetry rather than aggregate averages, especially in mobile ecosystems where device cohorts can behave differently under identical logic. These additions help teams reason about practical deployment consequences before release and ensure that chapter guidance is actionable in production, not merely persuasive in documentation.

From a risk perspective, this section further expands the treatment of compounded acquisition instability masked by single-variable tuning. The goal is not to enumerate generic hazards, but to define causal pathways that can be monitored, mitigated, and re-evaluated as software and environment conditions evolve. The editorial pass stresses that conservative behavior must be designed into the system architecture, communication layer, and review process simultaneously. If any one of these control surfaces weakens, the safety profile can degrade even while nominal model metrics appear stable. To prevent that failure mode, the chapter now reinforces explicit boundary language, threshold governance discipline, and predefined corrective-action triggers. This style of writing is intentionally procedural: it allows reviewers to infer how teams should act when evidence becomes contradictory or when operating assumptions no longer hold in the field.

The final long-form paragraph in this chapter links local detail to global program credibility by focusing on joint-condition robustness evidence across lighting, motion, and device cohorts. A thesis-level artifact must show that chapter claims can be defended repeatedly across release cycles, not only at publication time. For this reason, the text now highlights versioning discipline, evidence refresh cadence, and cross-chapter consistency checks as mandatory controls. Better capture ergonomics reduces frustrating retries and prevents unsafe outputs from unstable scans is used as the practical consequence lens: if this chapter is implemented well, users receive clearer and safer outcomes; if implemented poorly, uncertainty is obscured and risk is transferred silently to downstream care pathways. By adding this integration layer, the chapter becomes a decision instrument for technical leadership rather than a static reference section, and it aligns the page with long-horizon evidence governance expectations.

Boundary Statement

Frames captured outside protocol constraints must not be used for clinical signal estimation.

Acceptance criteria

Key takeaway

Protocol design, ergonomic guidance, and device gating jointly determine usable signal quality.

Open questions

Chapter 06

Specifies the deterministic QC state machine and preprocessing standardization required before model inference.

Why this chapter exists

Reader question: How do QC and preprocessing prevent unreliable outputs from reaching users?

Most field failures appear as confidence problems but originate in input quality and transformation drift.

Estimated reading time: 9 min

QC is the first formal safety barrier. No model output should be computed until QC verifies that imaging conditions meet minimum validity requirements. This reduces false confidence and constrains model exposure to adversarial or low-signal conditions. In a thesis-grade design, QC thresholds are explicit, testable, and auditable.

Preprocessing standardization minimizes cross-device instability by normalizing resolution, color statistics, temporal sampling, and ROI geometry. The objective is not to erase device differences entirely, but to reduce non-biological variance so model uncertainty reflects biological ambiguity rather than pipeline noise.

QC and preprocessing sequence

Safety doctrine

Abstain is not an error path. It is a designed safety outcome when evidence quality is insufficient for trustworthy inference.

Quantitative anchor

Decision = argmax{Pass, Reacquire, Abstain} under hard safety constraintsThe decision state is selected under deterministic constraints, not purely model confidence.

| State | Trigger condition | User-facing outcome |

|---|---|---|

| Pass | All hard thresholds satisfied | Continue to model stage and show output |

| Reacquire | Recoverable quality failure | Immediate recapture workflow |

| Abstain | Persistent low certainty or non-recoverable condition | No result; confirmatory-care guidance |

| Escalate | High-risk pattern with sufficient certainty | Priority escalation guidance |

QC decision state machine

Figure: QC state machine

mermaid

stateDiagram-v2

[*] --> Capture

Capture --> Pass: quality_ok

Capture --> Reacquire: recoverable_fail

Capture --> Abstain: persistent_low_certainty

Reacquire --> Capture

Pass --> [*]

Abstain --> [*]Decision transitions among pass, reacquire, and abstain outcomes.

Quality control is positioned as the principal boundary between acquisition variability and inference confidence. The expanded prose clarifies that QC thresholds should be empirically tuned using held-out validation data and revisited under post-market drift, not set once and forgotten. Because QC impacts both abstain rate and output reliability, threshold governance is a balancing problem: overly permissive thresholds inflate unsafe outputs, while overly strict thresholds reduce utility through excessive withholding. This chapter frames threshold policy as a measurable operating point, not a static constant.

Preprocessing is treated as a contract layer between capture and model. Any untracked change in color normalization, temporal sampling, or ROI transformation can invalidate prior model calibration. Therefore preprocessing configuration must be versioned and change-controlled with the same rigor as model weights. Editorially, this section now makes explicit that many perceived model regressions are actually upstream preprocessing regressions. Separating these concerns improves troubleshooting speed and reduces unnecessary model retraining triggered by pipeline drift.

The state machine model is expanded to distinguish user-recoverable failures from system-limited failures. Recoverable failures should produce concise corrective instructions and immediate recapture attempts. System-limited failures should terminate with abstain and clear escalation options. This distinction reduces repeated ineffective capture loops and supports better user trust. It also improves analytics, because reacquire-heavy sessions and abstain-heavy sessions imply different remediation paths at product and support levels.

A key editorial addition is observability requirements for QC behavior. The program should monitor per-device and per-context QC failure distributions, not only final output metrics. If a new OS release causes exposure failures to spike in a single device family, the system should detect and mitigate before clinical performance metrics visibly degrade. By placing QC telemetry in the research narrative, this chapter links algorithmic safety directly to real-time operations, reinforcing that preprocessing and QC are living controls.

QC policy should be modeled as a tunable operating frontier rather than a binary pass/fail ideology. Teams should publish target bands for pass rate, reacquire rate, abstain rate, and downstream reliability, then evaluate threshold candidates against this multi-objective profile. This avoids over-optimization of single metrics and enables transparent trade-off decisions. The chapter now emphasizes that QC thresholds are policy decisions informed by data, not purely technical constants. This framing improves cross-functional alignment because clinical and product owners can evaluate policy consequences before release.

Preprocessing governance is further strengthened by requiring transformation-level auditability. Each transformation stage should be reproducible and attributable in logs: color correction mode, ROI crop strategy, temporal smoothing profile, and normalization version. When post-market anomalies appear, teams can then isolate whether variance originated in input conditions, preprocessing, or inference. Without this granularity, root-cause analysis becomes speculative and correction cycles slow. This chapter therefore treats preprocessing transparency as an operational accelerant for safety and reliability maintenance.

This chapter is expanded as a deeper methodological narrative around quality-gate policy engineering and preprocessing traceability. The thesis assumes that durable clinical utility emerges only when operating-frontier optimization between reliability and abstain burden is translated into reproducible operating behavior, rather than left as a conceptual claim. That translation requires explicit mechanism descriptions, controlled vocabulary, and measurable constraints that can survive real deployment noise. A major editorial objective in this pass is to make hidden assumptions visible so expert reviewers can challenge them before they propagate into release posture. The chapter therefore emphasizes not just what the system intends to do, but why the chosen framing is technically defensible under smartphone-only limits, heterogeneous devices, and variable field conditions. By making chapter intent inspectable at this level, the document moves closer to thesis-grade rigor and reduces interpretation ambiguity across engineering, clinical, and regulatory readers.

At execution level, the expanded prose details how versioned transformation contracts with transformation-level auditability should be implemented as a controlled workflow instead of an ad hoc optimization effort. This includes defining preconditions, measurable outputs, failure states, and owner accountability for every critical transition. The chapter now treats process clarity as a safety property, because unclear operational boundaries usually manifest as delayed incident detection, inconsistent escalations, and policy drift over time. It also clarifies that quality outcomes must be interpreted through stratified telemetry rather than aggregate averages, especially in mobile ecosystems where device cohorts can behave differently under identical logic. These additions help teams reason about practical deployment consequences before release and ensure that chapter guidance is actionable in production, not merely persuasive in documentation.

From a risk perspective, this section further expands the treatment of threshold drift and opaque preprocessing regressions. The goal is not to enumerate generic hazards, but to define causal pathways that can be monitored, mitigated, and re-evaluated as software and environment conditions evolve. The editorial pass stresses that conservative behavior must be designed into the system architecture, communication layer, and review process simultaneously. If any one of these control surfaces weakens, the safety profile can degrade even while nominal model metrics appear stable. To prevent that failure mode, the chapter now reinforces explicit boundary language, threshold governance discipline, and predefined corrective-action triggers. This style of writing is intentionally procedural: it allows reviewers to infer how teams should act when evidence becomes contradictory or when operating assumptions no longer hold in the field.

The final long-form paragraph in this chapter links local detail to global program credibility by focusing on policy-level telemetry linking QC decisions to downstream outcome quality. A thesis-level artifact must show that chapter claims can be defended repeatedly across release cycles, not only at publication time. For this reason, the text now highlights versioning discipline, evidence refresh cadence, and cross-chapter consistency checks as mandatory controls. Robust QC and preprocessing keep users from receiving confident-looking results that should have been withheld is used as the practical consequence lens: if this chapter is implemented well, users receive clearer and safer outcomes; if implemented poorly, uncertainty is obscured and risk is transferred silently to downstream care pathways. By adding this integration layer, the chapter becomes a decision instrument for technical leadership rather than a static reference section, and it aligns the page with long-horizon evidence governance expectations.

Boundary Statement

Model inference without QC pass is prohibited in all release modes.

Acceptance criteria

Key takeaway

QC thresholds and preprocessing versions are policy-governed controls, not static technical constants.

Open questions

Chapter 07

Describes a layered inference architecture that combines ensemble modeling with deterministic rules and calibrated uncertainty.

Why this chapter exists

Reader question: How does the model stack decide when to output versus abstain?

Raw model confidence can be unsafe unless constrained by calibrated uncertainty and deterministic policy checks.

Estimated reading time: 10 min

A single-model architecture is insufficient for this problem class due to device heterogeneity and context variability. The adopted strategy uses endpoint ensembles and a deterministic rules arbiter to reduce brittle behavior. The arbiter is intentionally conservative: contradictory or low-certainty outputs route to abstain rather than optimistic interpretation.

Uncertainty calibration is treated as a release-critical component. Confidence bands are not cosmetic metadata; they are gating signals that determine whether an output is actionable, advisory, or withheld. Severity mapping is only allowed when confidence exceeds minimum calibrated floors, preventing false urgency from unstable estimates.

Arbiter responsibilities

Quantitative anchor

Final Score = Arbiter(Ensemble Scores, Rule Constraints, Uncertainty Calibration)Arbiter output is policy-constrained, not merely probabilistic.

| Layer | Function | Safety guardrail |

|---|---|---|

| Feature backbone | Extract temporal/spatial ocular features | Version-locked preprocessing |

| Endpoint ensemble | Generate endpoint-specific probabilities | Cross-model consistency checks |

| Rules arbiter | Apply deterministic safety constraints | Blocks contradictory unsafe outputs |

| Uncertainty module | Calibrated confidence scoring | Triggers abstain when below threshold |

| Severity mapper | Map to urgency grade | Requires minimum confidence floor |

Model stack and arbitration contract

Figure: Rules + ML arbiter

mermaid

flowchart LR

Features --> EnsembleA[Model A]

Features --> EnsembleB[Model B]

Features --> EnsembleC[Model C]

EnsembleA --> Arbiter

EnsembleB --> Arbiter

EnsembleC --> Arbiter

Rules[Safety rules] --> Arbiter

Arbiter --> Decision[Label + confidence OR abstain]Parallel model outputs reconciled by deterministic safety rules.

The ML architecture is expanded here as a hierarchy of obligations rather than a collection of models. Endpoint ensembles must produce stable probability surfaces, but those probabilities alone do not authorize output. The arbiter enforces deterministic safety logic across model disagreement, uncertainty calibration, and policy constraints. This distinction is critical in medical software, where high raw model confidence can still be unsafe if generated from degraded input conditions or out-of-distribution context. The chapter therefore positions arbitration as a governance layer over probabilistic inference.

Calibration quality is elevated to release-critical status. Confidence bands must reflect empirical reliability, not cosmetic ranking. Poorly calibrated confidence creates dangerous UI semantics, because users and clinicians may interpret high-confidence labels as high-validity labels even when failure rates remain unacceptable in certain cohorts. The editorial pass emphasizes per-stratum calibration review and periodic recalibration under drift surveillance. Confidence is treated as an evidentiary claim that must be continuously defended with fresh data.

Trade-off management is made explicit: improving sensitivity through lower thresholds can increase false positives and escalation load; raising certainty thresholds can suppress risky outputs but increase abstain frequency. The system must optimize these trade-offs against intended-use priorities and operational capacity. This chapter adds language to ensure threshold changes are discussed as multi-stakeholder decisions involving clinical risk appetite, support readiness, and regulatory implications rather than isolated model-team tuning choices.

Update governance for model and rule bundles is also expanded. A model improvement that changes feature salience or class calibration may require corresponding arbiter updates and new user-facing explanations. Decoupled updates can create latent inconsistencies where policy assumptions no longer match model behavior. By explicitly documenting co-versioning expectations and regression burdens, this chapter reduces the risk of silent misalignment between ML output behavior and safety policy logic in production.

A deeper arbitration treatment requires explicit disagreement doctrine for ensemble outputs. When endpoint models diverge materially, the system should not silently average confidence. Instead, it should trigger rule-level conflict handling that evaluates uncertainty calibration, QC confidence context, and boundary constraints before rendering output. This prevents false stability from numerical aggregation and aligns result behavior with safety intent. The chapter now recommends logging disagreement classes as first-class telemetry, enabling targeted refinement of model and rule interactions where conflicts recur.

Model governance is also expanded around lifecycle comparability. New model versions should be evaluated not only for global metric gains but for behavioral continuity across high-risk cohorts and decision thresholds. A model that improves average AUC but worsens calibration in critical strata may be clinically inferior for intended use. This chapter therefore advocates release criteria that combine discrimination, calibration, abstain impact, and subgroup stability. Such multi-axis evaluation keeps optimization aligned with safe clinical utility rather than leaderboard-style metric improvement.

This chapter is expanded as a deeper methodological narrative around ensemble modeling, arbitration logic, and calibrated uncertainty. The thesis assumes that durable clinical utility emerges only when disagreement-aware inference governance with confidence calibration controls is translated into reproducible operating behavior, rather than left as a conceptual claim. That translation requires explicit mechanism descriptions, controlled vocabulary, and measurable constraints that can survive real deployment noise. A major editorial objective in this pass is to make hidden assumptions visible so expert reviewers can challenge them before they propagate into release posture. The chapter therefore emphasizes not just what the system intends to do, but why the chosen framing is technically defensible under smartphone-only limits, heterogeneous devices, and variable field conditions. By making chapter intent inspectable at this level, the document moves closer to thesis-grade rigor and reduces interpretation ambiguity across engineering, clinical, and regulatory readers.

At execution level, the expanded prose details how co-versioned model-rule release discipline and conflict telemetry should be implemented as a controlled workflow instead of an ad hoc optimization effort. This includes defining preconditions, measurable outputs, failure states, and owner accountability for every critical transition. The chapter now treats process clarity as a safety property, because unclear operational boundaries usually manifest as delayed incident detection, inconsistent escalations, and policy drift over time. It also clarifies that quality outcomes must be interpreted through stratified telemetry rather than aggregate averages, especially in mobile ecosystems where device cohorts can behave differently under identical logic. These additions help teams reason about practical deployment consequences before release and ensure that chapter guidance is actionable in production, not merely persuasive in documentation.

From a risk perspective, this section further expands the treatment of numerical confidence that is not aligned with real-world reliability. The goal is not to enumerate generic hazards, but to define causal pathways that can be monitored, mitigated, and re-evaluated as software and environment conditions evolve. The editorial pass stresses that conservative behavior must be designed into the system architecture, communication layer, and review process simultaneously. If any one of these control surfaces weakens, the safety profile can degrade even while nominal model metrics appear stable. To prevent that failure mode, the chapter now reinforces explicit boundary language, threshold governance discipline, and predefined corrective-action triggers. This style of writing is intentionally procedural: it allows reviewers to infer how teams should act when evidence becomes contradictory or when operating assumptions no longer hold in the field.

The final long-form paragraph in this chapter links local detail to global program credibility by focusing on multi-axis release criteria across discrimination, calibration, and subgroup stability. A thesis-level artifact must show that chapter claims can be defended repeatedly across release cycles, not only at publication time. For this reason, the text now highlights versioning discipline, evidence refresh cadence, and cross-chapter consistency checks as mandatory controls. Users benefit when uncertain or conflicting model states are handled transparently rather than averaged into false certainty is used as the practical consequence lens: if this chapter is implemented well, users receive clearer and safer outcomes; if implemented poorly, uncertainty is obscured and risk is transferred silently to downstream care pathways. By adding this integration layer, the chapter becomes a decision instrument for technical leadership rather than a static reference section, and it aligns the page with long-horizon evidence governance expectations.

Boundary Statement

Any endpoint without calibrated uncertainty and arbiter constraints is non-deployable.

Acceptance criteria

Key takeaway

Arbitration is the safety governor over ensemble predictions, conflicts, and boundary conditions.

Open questions

Chapter 08

Defines data acquisition, labeling windows, stratification, and dataset governance required for defensible model development.

Why this chapter exists

Reader question: Is the dataset built to support fair and defensible claims?

Data distribution and label integrity define practical claim limits more than architecture alone.

Estimated reading time: 9 min